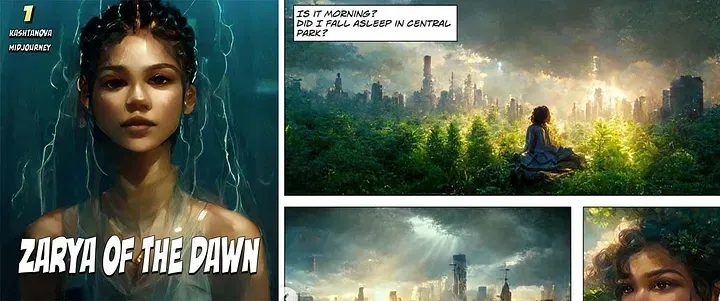

We received the decision today in Kristina Kashtanova's case concerning the comic book Zarya of the Dawn. Kris will keep the copyright registration, but it will be limited to the text and to the whole work as a compilation.

In one sense this is a success, in that the registration is still valid and active. However, it is the most limited a copyright registration can be and it doesn't resolve the core questions about copyright in AI-assisted works. Those works may be copyrightable, but the USCO did not find them so in this case.

Nevertheless I am surprised at this result. In the USCO's recent filing in the Thaler case, the Office said that it was "preparing registration guidance for works generated by using AI." The question is whether the images Kris generated using Midjourney are copyrightable. If the Office is preparing guidance to allow registration of AI-assisted works, that strongly suggests that the USCO believes there is some threshold of human involvement that is sufficient to allow registration. The Office recognizes that Kris had input into the images that were created for Zarya of the Dawn, but it just doesn't seem to feel that the human input is sufficient.

The crux of the USCO's argument appears to be that the author has limited control over what picture is generated using Midjourney (or a similar tool). They recognize that the author has some control over what comes out of Midjourney but not enough: "the process is not controlled by the user because it is not possible to predict what Midjourney will create ahead of time" (p. 8) or "Rather than a tool that Ms. Kashtanova controlled and guided to reach her desired image, Midjourney generates images in an unpredictable way." (p. 9)

There are a number of errors with the Office's arguments, some legal and some factual. However, they all seem to stem from a core factual misunderstanding of the role that randomness plays in Midjourney's image generation.

The Office seems to think that the outputs from Midjourney are almost totally "random" and "unpredictable," so whatever the artist puts into Midjourney just doesn't matter. At most it's a "suggestion" that can be ignored.

First, that's not the right legal standard. The standard is whether there is a "modicum of creativity," not whether Kris could "predict what Midjourney [would] create ahead of time." In other words, the Office incorrectly focused on the output of the tool rather than the input from the human.

Jackson Pollock famously couldn't predict how the paint he used would drip onto the canvas. Pollock designed his paintings - he knew what he wanted the end result to be - but he used a process involving random dripping and flicking of paint to make his art. In music, each performance of John Cage's 4'33" is entirely defined by the random sounds that are made by the audience and the world around the stage.

In photography, the closest analogous art, there are famous photographs that captured animals, people, or humorous situations entirely by mistake. Nevertheless, the output is still copyrightable, because a human had at least a "modicum" of input into the shot.

When examined under the correct legal standard - did Kris provide a "modicum" of input - the Office's answer seems inconsistent. The Office recognizes that Kris personally authored the prompts and other inputs. It just doesn't think Kris did enough. But a "modicum" is the merest sliver of originality and creativity - "a very modest quantum of originality will suffice" (1 Nimmer on Copyright § 2.08). Courts have found that almost any decision that goes into a photograph will do - even decisions like which camera to use, choosing a brand of film, and taking several shots and picking one. Kris made exactly analogous decisions and had the additional element of the personally composed prompts instead of just a simple "button press" as would occur on a camera.

The second error is encapsulated in the Office's statement that the fact that "Midjourney's specific output cannot be predicted by users makes Midjourney different for copyright purposes than other tools used by artists" (p. 9). The problem is that if this statement is construed narrowly enough to make it true, then it promulgates an incorrect standard. If this statement is construed more broadly, then it is factually false.

If the Office's statement is interpreted narrowly, it is true that the exact output is not predictable. However, having 100% control over the output is also not the correct standard. The standard is a modicum of creative input leading to the output.

If the Office's statement is interpreted broadly, it is factually false. Basic experimentation will show that when you type "cute purple dinosaur" into Midjourney, you get back images of a cute purple dinosaur, not a motorcycle or a cloud. Further, the more inputs given by the artist, the more control is exerted over the output. Again, not 100% control - but far more than the Office seems to understand.

Third, the Office seems to think we were making a "sweat of the brow"-type argument when we pointed out that generation of the pictures in Zarya of the Dawn took time. The Office apparently thought that Kris just hit the "generate" button a thousand times, hoping that the right picture would come up. If generation in Midjourney was random in the way the Office thinks, that might have been so. But the reality is much different.

Kris' creation of the images was selective and iterative. Kris would prompt for the generation of a first set of images. Kris would then select one of the first generation of images, and use that first-generation image and a tweaked prompt to create a second generation of images. Kris would then use a selected image from the second generation as part of the input to generate a third generation, and so on. The images in Zarya of the Dawn were evolved under close artistic direction. There may have been randomness inherent in the tool, but the images were nevertheless designed over many iterations to have a specific subject, lighting, content, layout, and feel. It should not matter that the subject, lighting, content, and layout were generated instead of captured.
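The select-and-regenerate loop described above can be sketched as a toy simulation. This is only an illustration under loud assumptions: an "image" is reduced to a tuple of three numbers, the artist's vision is a fixed target point, and `candidates` and `refine` are hypothetical names I made up; none of this is Midjourney's actual interface or algorithm, and it models only the selection step, not the tweaked prompts.

```python
import math
import random


def candidates(parent, rng, count=4, spread=1.0):
    """Return `count` random variations of `parent`.

    Stand-in (an assumption) for one grid of images generated from a
    previously selected image.
    """
    return [tuple(v + rng.gauss(0, spread) for v in parent) for _ in range(count)]


def refine(vision, generations=30, seed=0):
    """Repeatedly generate random variations and keep the one closest to `vision`."""
    rng = random.Random(seed)
    current = (0.0, 0.0, 0.0)  # arbitrary first-generation image
    history = [math.dist(current, vision)]
    for _ in range(generations):
        options = candidates(current, rng)
        # The human step: pick the option that best matches the vision,
        # or keep the current image if none of the new ones is better.
        current = min(options + [current], key=lambda c: math.dist(c, vision))
        history.append(math.dist(current, vision))
    return current, history


if __name__ == "__main__":
    final, history = refine(vision=(5.0, -3.0, 2.0))
    print("distance from vision, first vs. last:", history[0], history[-1])
```

Every individual candidate in this sketch is random, yet the distance to the vision never increases from one generation to the next, because a person is choosing at each step. That is the difference between hitting "generate" a thousand times and directing the work.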

Fourth, there seems to be a subtle factual error associated with how latent diffusion models work. The Office quotes from Midjourney's documentation describing diffusion models generally, and based on that reading, comes to the conclusion that "Because Midjourney starts with randomly generated noise that evolves into a final image, there is no guarantee that a particular prompt will generate any particular visual output." The Office compares the prompt to a mere "suggestion" that may or may not be followed by the tool.

The subtle error comes in a misunderstanding about the "randomly generated noise." Midjourney technically has two layers: a semantic "latent" layer associated with meaning and a visual "pixel" layer associated with images. When a person inputs a prompt into Midjourney, the effect is to focus the attention of the tool on one or more specific spots in the latent domain, places that are statistically associated with particular meanings. The visual layer evolves the final image from the noise based upon the latent "meaning" in the prompt.

This is how the artist controls the outputs that come from Midjourney. The control is only approximate, not perfect. The widespread practice of "prompt engineering" is actually an exploration through latent space - a probabilistic landscape of ideas and meanings - to match the expression to the artist's conception. The goal of the artist is to develop the exact set of inputs - images, words, and options - that will lead to the generation of the desired output.
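A toy sketch may help show why a random starting point does not make the result arbitrary. Everything here is an assumption for illustration: the two-dimensional "latent space," the coordinates in `CONCEPTS`, and the functions `generate` and `nearest_concept` are invented, and the update rule is a simple noisy drift toward a target, not Midjourney's actual model.

```python
import math
import random

# Hypothetical latent positions for three meanings (invented for illustration).
CONCEPTS = {
    "dinosaur": (4.0, 1.0),
    "motorcycle": (-3.0, 2.0),
    "cloud": (0.0, -4.0),
}


def generate(prompt, seed, steps=50, guidance=0.2, noise_scale=0.3):
    """Start from random noise and drift toward the prompt's latent target."""
    rng = random.Random(seed)
    target = CONCEPTS[prompt]
    # The starting point is pure noise and does not depend on the prompt.
    x, y = rng.gauss(0, 5), rng.gauss(0, 5)
    for step in range(steps):
        # Injected noise shrinks linearly to zero by the final step.
        sigma = noise_scale * (1 - (step + 1) / steps)
        x += guidance * (target[0] - x) + rng.gauss(0, sigma)
        y += guidance * (target[1] - y) + rng.gauss(0, sigma)
    return (x, y)


def nearest_concept(point):
    """Name the concept whose latent position is closest to `point`."""
    return min(CONCEPTS, key=lambda name: math.dist(point, CONCEPTS[name]))


if __name__ == "__main__":
    for seed in range(3):
        point = generate("dinosaur", seed)
        print(seed, point, nearest_concept(point))
```

In this sketch, different seeds give different exact points, so the exact output is not predictable. But every run lands next to the meaning the prompt selected and nowhere near the others. The randomness affects the details; the prompt determines the destination.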

So when the Office compares the prompt to a "suggestion" like a patron might give to a painter, it is anthropomorphizing the tool and coming to an invalid conclusion. Midjourney can't take "suggestions." It can only do exactly as it is programmed to do and pull from an artist-chosen place in its massive table of probabilities to drive the generation of an image.

I have come to the conclusion that almost every work created by an AI tool should be copyrightable, even without the iterative refinement and post-processing that Kris performed. The more I search, the more I see similarities with photography and the long copyright battles over what minimum amount of creativity is needed to support the copyright in a photograph.

Photographs are difficult doctrinally for copyright because of the high amount of tool involvement. The USCO repeatedly quoted Burrow-Giles in its response, a case that turned on the creativity and artistry that the photographer showed in posing the subject. However, Burrow-Giles was not the last word. The Office didn't even quote Bleistein, which recognized that even prosaic photos had enough human input to be copyrightable.

The standard for copyrightability in photographs has been discussed several times, but the most common cite is from Judge Learned Hand in Jewelers' Circular Pub. Co. v. Keystone Pub. Co., 274 F. 932 (S.D.N.Y. 1921). He stated: "no photograph, however simple, can be unaffected by the personal influence of the author" (id. at 934). In reviewing all the cases about photographic copyright, Nimmer on Copyright concluded that this "has become the prevailing view," and therefore "almost any[] photograph may claim the necessary originality to support a copyright merely by virtue of the photographer's personal choice of subject matter, angle of photograph, lighting, and determination of the precise time when the photograph is to be taken." 1 MELVIN B. NIMMER & DAVID NIMMER, NIMMER ON COPYRIGHT § 2.08[E][1], at 2-130 (2d ed. 1999).

AI-assisted art is going to need to be treated like photography. It is just a matter of time.